Agent Managers at 200 People: What HBR’s Latest Concept Means for Growth-Stage Leaders

Last month, your VP of Marketing subscribed to an AI writing assistant. Your VP of Engineering approved three Copilot licenses. Your head of customer success started routing ticket triage through an AI agent that she configured herself on a Sunday afternoon.

Nobody told you about any of it until the Monday standup.

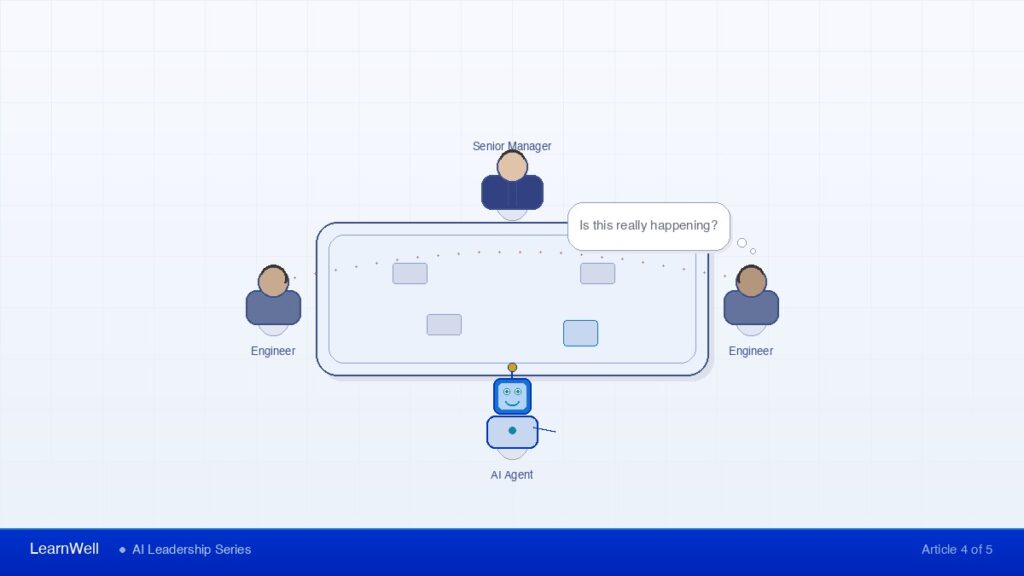

If you are running a 200-person company and that scenario sounds familiar, you have just bumped into the question that Harvard Business Review surfaced in February: Who manages the AI agents?

HBR coined the term “agent manager” and framed it as a new role — someone whose job is to oversee AI agents the way a people manager oversees direct reports. It is a useful concept. It was also written for the Fortune 500.

Here is what it means for your 200-person company, where you do not have the budget for a new role and your org chart is already stretched.

The Problem Is Not a Missing Role. It Is a Missing Competency.

At a 5,000-person enterprise, you can hire an “agent manager.” You can create a Center of Excellence. You can fund a cross-functional AI governance team.

At 200 people, you cannot. Your VPs already carry a manager-to-employee ratio of 1:7 to 1:10. Your middle managers — 58% of whom received no formal management training — are now adding AI tools to their workflows without a framework for how those tools fit into the decision-making structure of the company.

This is the 200-Employee Wall: the growth stage where informal coordination breaks down and the systems that got you to 50 people no longer scale. AI agents do not just hit this wall. They accelerate you into it, because growth-stage companies adopt tools faster than they adopt governance.

This is the distinction HBR missed: at your scale, agent management is not a new hire. It is a new competency for existing leaders. And it is arriving before most of those leaders have finished learning how to manage people.

Gartner projects that 40% of enterprise applications will include task-specific AI agents by the end of 2026, up from less than 5% in 2025. That is not a trend line. That is a compression wave. And it will hit your company faster than you expect.

The Decision Rights Question Changes

I have spent 20 years working with tech company leaders, and I have seen more than 125 leadership teams navigate the transition from startup coordination to scaled infrastructure. That work produced a framework I call the Decision Rights Map — an explicit document that answers three questions for every recurring decision: Who owns it? At what threshold does it escalate? Who needs to know the outcome?

When your VP of Engineering uses an AI agent to triage incoming bug reports, prioritize the backlog, and draft sprint goals, the Decision Rights Map needs a new column. Not just “who decides” but “what decides” — and under what conditions does a human override the output before it reaches the team.
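One way to picture the new column is as an extra pair of fields on each row of the map. The sketch below is illustrative only: the `DecisionRight` class, its field names, and the agent name are assumptions made for the example, not part of the framework itself.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DecisionRight:
    """One row of a Decision Rights Map (field names are illustrative)."""
    decision: str                 # the recurring decision
    owner: str                    # who owns it
    escalation_threshold: str     # at what threshold it escalates
    informed: list[str]           # who needs to know the outcome
    agent: Optional[str] = None   # what decides, if an AI agent is involved
    human_override_when: Optional[str] = None  # when a human reviews before output reaches the team

    def has_unreviewed_agent(self) -> bool:
        """True when an agent participates but no override condition is written down."""
        return self.agent is not None and not self.human_override_when


backlog = DecisionRight(
    decision="Prioritize engineering backlog",
    owner="VP of Engineering",
    escalation_threshold="Any change that moves a committed release date",
    informed=["Product", "Customer Success"],
    agent="bug-triage agent",  # hypothetical agent name
)

# Flags the row because an agent is named but no override condition exists yet.
print(backlog.has_unreviewed_agent())
```

A row that names an agent but leaves the override condition blank is the kind of gap this extra column is meant to surface.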

Case Study: Marcus, CTO, 230-Employee Infrastructure Company

Marcus’s engineering leads started using AI agents to auto-assign Jira tickets based on historical velocity data. On paper, it was a productivity win. In practice, the agent was making resource allocation decisions that used to require a conversation between two directors. Neither director realized the agent was doing it. The engineers assumed the directors had approved the new workflow. The directors assumed engineering was still triaging manually.

The cost: Within six weeks, two projects were overstaffed and one critical integration was three sprints behind schedule. The problem was not the AI tool — it was the invisible reallocation of decision authority to a system with no escalation protocol, no ownership threshold, and no way to signal when it exceeded its mandate.

Three Delegation Archetypes You Will Recognize (Adapted for AI)

Over the years, I have identified four delegation archetypes that keep managers stuck. Three of them get worse when AI enters the picture.

The Telepath. This manager delegates to AI without context and wonders why outputs disappoint. They paste a vague prompt into a tool, get a mediocre result, and conclude that AI “doesn’t work for our use case.” The problem is not the AI. The problem is that they delegate to AI the same way they delegate to their team — without clear parameters, success criteria, or constraints.

What to do instead: Treat every AI agent like a new hire on day one. Specify the inputs, define what a good output looks like, and set the boundary where the agent stops and a human reviews.

The Vampire. This manager lets AI do the work but keeps all the decisions. Every AI-generated report gets manually reviewed, edited, and rewritten before it reaches the team. The productivity gain from the AI tool is consumed entirely by the manager’s refusal to trust the output. What looked like a time-saver becomes a time-tax.

What to do instead: Define an accuracy threshold. If the agent’s output meets criteria 80% of the time, approve it for distribution without manual review. Audit weekly instead of editing daily.
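As a rough illustration, the weekly audit can be reduced to a pass rate compared against the threshold. This is a minimal sketch, assuming the audit produces a simple met-criteria or did-not-meet-criteria result for each sampled output; the function name and the sample data are hypothetical.

```python
def can_skip_manual_review(audit_results: list[bool], threshold: float = 0.80) -> bool:
    """Return True when the share of audited outputs that met criteria reaches the threshold."""
    if not audit_results:
        return False  # no audit data yet, so keep a human reviewing
    pass_rate = sum(audit_results) / len(audit_results)
    return pass_rate >= threshold


# Hypothetical weekly sample: 17 of 20 audited outputs met the agreed criteria.
weekly_sample = [True] * 17 + [False] * 3
print(can_skip_manual_review(weekly_sample))
```

Defaulting to manual review when there is no audit data is a design choice in this sketch: the agent earns unreviewed distribution only after a sample exists.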

The Bureaucrat. This manager wraps every AI tool in so many approval layers that the team would move faster without the tool. Three people must sign off before the AI-generated customer response goes out. The AI drafts a project plan, but it requires a committee review before anyone can act on it. The control structure is more expensive than the manual process it replaced.

What to do instead: Apply your Decision Rights Map. If the output falls within an existing decision threshold (under $50K impact, within departmental scope, reversible within 48 hours), give the agent the same autonomy you would give the human who used to own that task.
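The three example thresholds above can be written as a single check. This is a sketch under stated assumptions: the `AgentOutput` fields and the function name are invented for illustration, and the default limits simply mirror the example figures in the text ($50K, departmental scope, 48 hours). Your own map would supply its own values.

```python
from dataclasses import dataclass


@dataclass
class AgentOutput:
    """Hypothetical description of one agent output, for illustration only."""
    impact_usd: float         # estimated financial impact
    within_department: bool   # stays inside the owning department's scope
    hours_to_reverse: float   # how long it would take to undo


def agent_may_act_alone(
    output: AgentOutput,
    max_impact_usd: float = 50_000,
    max_hours_to_reverse: float = 48,
) -> bool:
    """True when the output falls inside all three example thresholds."""
    return (
        output.impact_usd < max_impact_usd
        and output.within_department
        and output.hours_to_reverse <= max_hours_to_reverse
    )


routine_reply = AgentOutput(impact_usd=500, within_department=True, hours_to_reverse=1)
pricing_change = AgentOutput(impact_usd=120_000, within_department=False, hours_to_reverse=72)

print(agent_may_act_alone(routine_reply))
print(agent_may_act_alone(pricing_change))
```

Anything that fails the check goes to the human owner listed in the map, the same escalation path a person in that role would follow.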

| Archetype | The Pattern | The Cost | The Fix |
|---|---|---|---|
| The Telepath | Delegates to AI without context or constraints | Mediocre outputs lead to “AI doesn’t work” conclusion | Treat every AI agent like a new hire: specify inputs, define good output, set review boundaries |
| The Vampire | Lets AI work but manually rewrites everything | Productivity gain consumed by refusal to trust output | Set 80% accuracy threshold; audit weekly instead of editing daily |
| The Bureaucrat | Wraps AI tools in excessive approval layers | Control structure costs more than the manual process it replaced | Apply Decision Rights Map: same autonomy as the human who owned the task |

Three Things to Do This Quarter

If you are leading a company between 150 and 300 people and AI agents are starting to show up in your workflows, here are three moves to make before the end of Q2.

1. Extend your Decision Rights Map to include AI agents as decision participants.

Take every recurring decision in your map and ask: Is an AI agent now influencing or making this decision? If yes, document it. Specify who owns the agent’s configuration. Define the threshold at which its output requires human review. Make the agent’s role as visible as any team member’s.

If you do not have a Decision Rights Map, build one. The framework answers three questions for every recurring decision: Who owns it? At what threshold does it escalate? Who needs to know the outcome? Start with your top 10 cross-functional decisions. The process takes a half-day with your leadership team and saves 10-15 hours per week in escalation overhead within the first quarter.

2. Audit where AI agents are already making decisions you have not explicitly authorized.

This is the Marcus problem. Your people are adopting AI tools faster than your governance can track. Run a simple audit: ask each department head to list every AI tool in active use, what decisions it influences, and whether anyone formally approved its role in the workflow.

Most CEOs I work with discover three to five AI agents making operational decisions that nobody put on a decision rights map. McKinsey found that 88% of organizations use AI in at least one function. The question is not whether your company uses AI. The question is whether you know where it is making decisions on your behalf.
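If the department heads' answers are collected in a spreadsheet or a short script, the audit comes down to one filter: tools that influence decisions but were never formally approved. The sketch below assumes that shape. The `ToolRecord` fields and the inventory entries are hypothetical examples, not real audit data.

```python
from dataclasses import dataclass


@dataclass
class ToolRecord:
    """One line of the audit as reported by a department head (illustrative fields)."""
    department: str
    tool: str
    decisions_influenced: list[str]
    formally_approved: bool


def unauthorized_decision_makers(records: list[ToolRecord]) -> list[ToolRecord]:
    """Return tools that influence at least one decision without formal approval."""
    return [r for r in records if r.decisions_influenced and not r.formally_approved]


# Hypothetical inventory, loosely modeled on the scenarios described in this article.
inventory = [
    ToolRecord("Engineering", "ticket auto-assigner", ["resource allocation"], False),
    ToolRecord("Marketing", "writing assistant", [], False),
    ToolRecord("Customer Success", "ticket triage agent", ["ticket priority"], True),
]

for record in unauthorized_decision_makers(inventory):
    print(f"{record.department}: {record.tool} -> {', '.join(record.decisions_influenced)}")
```

Each flagged tool is a candidate for a new row in the Decision Rights Map, with an owner and a review threshold attached.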

3. Add “agent management” to your VP development agenda, not your hiring plan.

The manager-to-employee ratio in high-performing tech companies runs 1:7 to 1:10. Your VPs are already stretched. Adding a new role is not realistic at your stage. What is realistic: building agent management into your existing leadership development program.

This means training your VPs to write clear briefs for AI tools (fixing the Telepath problem), define accuracy thresholds (fixing the Vampire problem), and set appropriate autonomy levels (fixing the Bureaucrat problem). These are the same delegation skills they need for managing people — applied to a new kind of team member.

The Competency That Separates the Next Stage

HBR is right that agent management matters. The gap in their analysis is audience. At a Fortune 500 company, you hire for it. At a 200-person company, you build it into your existing leaders.

The companies that will navigate this well are the ones whose leaders already have clear decision rights, clean delegation habits, and the infrastructure to absorb new complexity without creating new bottlenecks. The companies that will struggle are the ones that are still running on the coordination model that got them to 50 people.

If you are not sure where your leadership team stands on decision infrastructure, that is the first question to answer.

I run a 30-minute discovery call where we map your current decision rights structure and identify the specific gaps that AI agents will expose as they become part of your workflow. No pitch deck, no slide show — just a diagnostic conversation about where your infrastructure stands and what needs to change before the compression wave hits.

Related Articles

- Decision Velocity When AI Skills Are Uneven — The new decision bottleneck AI creates when fluency is uneven

- AI Fluency for Leadership Teams — Four levels of team AI fluency your VPs need before managing agents

- Beyond 1:1 Coaching — Building the collective capability agents require

- The Alignment Tax is Unforgiving — The hidden cost that compounds when agents make untracked decisions